Written By Scott Habeeb, Salem High School

I have to confess something: I care way too much about Fantasy Football. Throughout the fall, I’m constantly checking my Yahoo Fantasy app, plotting my next waiver wire strategy, or looking online for updates about player injuries. I am addicted to Fantasy Football.

This year I was the champion of my Fantasy Football league. Actually, that’s an understatement. I smashed the competition!

Players in our league can win in 3 categories:

- Regular Season Champ: After 13 weeks, this team has the best win/loss record and qualifies for the playoffs as the top seed.

- Playoff Champ: This team makes the playoffs and then wins the 3-week end-of-season tournament.

- Total Points Champ: This team scores the most points over the course of the 16-week season.

As this year’s regular season, playoff, and total points champ, my team was the undisputed champion of the league.

My goal isn’t to brag about my prowess at Fantasy Football. (Although I have to admit I enjoy doing so…) But for this post to help educators, I first need you to understand the following: My season was the best season of anyone in my league and would be considered a dream season for anybody who plays Fantasy Football.

Then I got an email from Yahoo Fantasy Sports, our league’s Fantasy Football Platform.

A Surprise for the Champ: My Season Story

I love Yahoo’s mobile app, their player updates, and the outstanding data analysis they provide to help players make decisions. So when I received an email from Yahoo with a link to my “Season Story,” I was excited to read their analysis of my successful year.

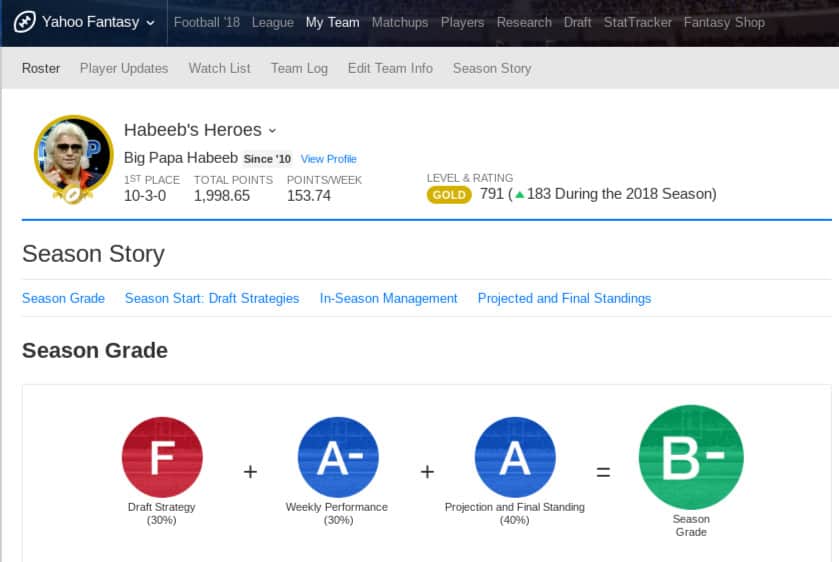

It turned out that by “Season Story” Yahoo meant it was sharing with me an overall grade for, or assessment of, my season. Imagine my surprise when I learned Yahoo assigned a B- as the grade for my dream season! How could this be?

Grading: Yahoo-style

Much like what happens in the traditional American classroom, Yahoo had used a formula to determine my final grade. The formula averaged together the following 3 key data points:

1. Projection and Final Standing: 40% of the Season Grade

This compares where I finished the season with where I was projected to finish when the season began. Yahoo graded me at an A level, which makes sense. After all, I was the champion in all three of our league’s categories. Plus, I had been projected to finish 14th out of 16 teams. With this combination of overall achievement and growth, if I wasn’t an A in Projection and Final Standing, who could be?

2. Weekly Performance: 30% of the Season Grade

Yahoo averaged together each week’s performance to get this score. Yahoo graded me at an A- level. An A- makes sense. I could even agree with a B+. Some weeks my team was amazing. Other weeks it was good. But it was never bad.

3. Draft Strategy: 30% of the Season Grade

Yahoo graded me at an F level. In other words, at the beginning of the season, Yahoo didn’t think I had selected a good team. Was Yahoo correct that I picked the wrong players? That might have been a logical prediction early on. Perhaps I didn’t start the season on a strong note. Yahoo already noted that with my Projection and Final Standing category. But the evaluation of my season’s start ended up being the reason my grade was a B- at the end of the season.
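For readers who want to check the math, here is a minimal sketch of how a 40/30/30 weighted average could turn an A, an A-, and an F into a B-. Yahoo’s actual grade scale is not given in the email, so the 4.0-style grade points, the nearest-letter rounding, and the names `GRADE_POINTS`, `WEIGHTS`, and `season_grade` are assumptions made purely for illustration.

```python
# Assumed 4.0-style grade points; Yahoo's real scale is not stated in the post.
GRADE_POINTS = {
    "A": 4.0, "A-": 3.7, "B+": 3.3, "B": 3.0, "B-": 2.7,
    "C+": 2.3, "C": 2.0, "C-": 1.7, "D+": 1.3, "D": 1.0, "D-": 0.7, "F": 0.0,
}

# Category weights as described in the three items above.
WEIGHTS = {
    "Projection and Final Standing": 0.40,
    "Weekly Performance": 0.30,
    "Draft Strategy": 0.30,
}


def season_grade(category_grades):
    """Return (weighted score, nearest letter) for a dict of category letter grades."""
    score = sum(
        GRADE_POINTS[category_grades[name]] * weight
        for name, weight in WEIGHTS.items()
    )
    # Hypothetical rounding rule: pick the letter whose grade points are closest.
    letter = min(GRADE_POINTS, key=lambda g: abs(GRADE_POINTS[g] - score))
    return score, letter


season = {
    "Projection and Final Standing": "A",
    "Weekly Performance": "A-",
    "Draft Strategy": "F",
}
score, letter = season_grade(season)
print(f"{score:.2f} -> {letter}")  # 2.71 -> B-
```

Under these assumptions, the F in Draft Strategy alone pulls a summative A down to roughly 2.71, which lands on a B-.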

Honestly, this grading methodology makes no sense. The purpose of the season grade should be to communicate how successful the season was. With that in mind, the only grade that should have mattered was the summative score of A representing my Projection and Final Standing.

That score shows that I achieved at the highest possible level and that I grew beyond expectations. Averaging together the other data points only detracted from the accuracy of what Yahoo was trying to communicate.

Comparing Yahoo and Schools

Similarly, the common and very traditional practice of averaging together different types of student data taken at various points in time throughout a school year detracts from the ability of a student’s final grade to accurately communicate mastery of content.

Let’s compare Yahoo’s grading language to the language we use in schools:

1. Projection and Final Standing = Summative Assessment and Student Growth

Where a student ends up when all is said and done is the summative assessment of a student’s level of content mastery, and student growth refers to how much they grow from start to finish.

2. Weekly Performance = Formative Assessment

All the things students do along the way - the practice that helps them learn, the homework, the classwork, the quizzes, the activities - these are formative assessments. Formative assessment’s purpose is to serve as practice and to provide feedback that helps students both grow and achieve summative mastery.

3. Draft Strategy = Pre-Assessment

Where a student is before the learning occurs is the pre-assessment. Pre-assessment data helps us know what formative assessments will be necessary to help individual students grow and to guide each of them toward summative assessment mastery.

Lessons from Yahoo for Educators

I believe that by studying Yahoo’s methodology, educators will notice the weaknesses inherent in our own widely accepted traditional grading and assessment practices. Specifically, we can be reminded that:

- Averaging past digressions with future successes falsifies grades. Pre-assessment data, or data that represents where a student was early in the learning process, should never be averaged with summative assessment data. The early data is useful to guide students toward growth and mastery, but it should never be held against a student by being part of a grade calculation. Otherwise, we run the risk of having the Draft Strategy dictate the Season Story despite the more accurate picture painted by the Projection and Final Standing.

- Formative assessment is useful for increasing learning but less so for determining a grade. Knowing my weekly performance enabled me to make decisions to help my team improve, but my team not always performing at an A level does not detract from my team mastering its goals and growing appropriately. If, as a result of formative assessment feedback, a student makes learning decisions that bring her closer to summative mastery, why would we then base the score that represents the summative mastery on the formative feedback?

- Formative assessment data loses value once we have summative data. Why did Yahoo care about my Draft Strategy and Weekly Performance once it knew my Final Standing? It’s possible that formative assessment data could be used as additional evidence of learning if we are concerned that the summative assessment doesn’t paint a complete picture, but, in general, once mastery is demonstrated, the fact that the student wasn’t always at that same level of mastery becomes irrelevant.

- It’s impossible to create the perfect formula to measure all student learning. Yahoo chose to use a 30/30/40 formula. Why? Some schools say homework should count 10%. Why? Some districts say exams must count 25% of a grade. Why? Some teachers make formative assessment count 40%. Why? Some schools average semesters, some average quarters, and some average 6 grading periods. Why? There is an inherent problem with averaging. We make up formulas because they sound nice and add up to 100%, but there is no definitive formula for determining learning or growth. Averaging points in time, chunks of time, or data taken over time will always mask accuracy. Yet educators, like Yahoo, feel the need to try to find a formula to justify grades.

- Using formulas to determine grades inherently leads to a focus on earning points instead of on learning content. In the case of Yahoo, they didn’t advertise their formula in advance. Now that I know this formula, I still don’t anticipate changing my strategy in the future because, frankly, I don’t care about my season grade. I care about winning. But students and parents are naturally going to care about grades because of the doors that grades on transcripts open or close. As long as there are final grades, there will always be an interest in getting good grades. When grades are the result of a formula, it naturally leads to a quest for numerator points, something that may not be connected to learning. When this is the case, students ask for opportunities to earn points. When grades are a true reflection of content mastery, a focus on learning is more likely to result. In these situations, students ask for opportunities to demonstrate learning.
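To make the point about arbitrary formulas concrete, the sketch below runs the same three category grades (A, A-, F) through several weightings. Only the 40/30/30 split comes from Yahoo; the other weightings, the 4.0-style grade points, the nearest-letter rounding, and the function name `letter_for` are hypothetical, chosen only to show how much the formula, rather than the season, can drive the final letter.

```python
# Assumed 4.0-style grade points; not taken from Yahoo or any school policy.
GRADE_POINTS = {
    "A": 4.0, "A-": 3.7, "B+": 3.3, "B": 3.0, "B-": 2.7,
    "C+": 2.3, "C": 2.0, "C-": 1.7, "D+": 1.3, "D": 1.0, "D-": 0.7, "F": 0.0,
}

# Category grades in order: final standing, weekly performance, draft strategy.
GRADES = ("A", "A-", "F")


def letter_for(weights, grades=GRADES):
    """Weighted average of the grades, mapped to the nearest letter."""
    score = sum(GRADE_POINTS[g] * w for g, w in zip(grades, weights))
    return min(GRADE_POINTS, key=lambda letter: abs(GRADE_POINTS[letter] - score))


# Weights in the same order as GRADES. All but the Yahoo split are made up.
weightings = {
    "Summative only": (1.0, 0.0, 0.0),
    "Yahoo 40/30/30": (0.4, 0.3, 0.3),
    "Hypothetical 60/30/10": (0.6, 0.3, 0.1),
    "Hypothetical 20/30/50": (0.2, 0.3, 0.5),
}

results = {name: letter_for(w) for name, w in weightings.items()}
for name, letter in results.items():
    print(f"{name}: {letter}")
```

With these assumed grade points, the identical season comes out as an A, a B-, an A-, or a C depending only on which weights are chosen.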

A Call to Action

It’s time for schools to stop being like Yahoo Fantasy Sports when it comes to our assessment and grading practices. My Season Story grade should be an accurate reflection of where my season ended up. Along the way, I need the descriptive feedback that will enable me to make informed growth-based decisions. Students need final grades that are accurate reflections of where they end up in the learning process. Along the way, they need appropriate descriptive feedback so they can make informed growth-based decisions, as well.

Traditional grading is rooted in decades of practice, and shifting the course of our institutional inertia to focus more appropriately on learning rather than grading will take effort and time. Schools must choose to embark on Assessment Journeys that lead to accurate feedback and descriptions of learning, mastery of content, and student growth.

Let’s get started today!


Contributing Author:

Scott Habeeb began his teaching career at Salem High School (Salem, VA) in 1997, where he taught Modern World History. In 2004, Scott moved into the role of Assistant Principal for Curriculum and Instruction, also at Salem High School. Currently, Scott is in his 6th year serving in the role of Principal of Salem High School.

Outside of his duties at Salem High School, Scott regularly speaks at schools, school divisions, and conferences across the country. Most commonly, he speaks about Freshman Transition, Formative Assessment, Standards Based Learning, and his favorite topic, Going Beyond the Content to inspire and motivate students.

Scott is the author or co-author of several articles as well as the co-author of The Ninth Grade Opportunity: Transforming Schools from the Bottom Up.